Tesla hasn’t released an Autopilot safety data report in about a year. It’s not clear why, but it is disappointing: the company is being opaque with its self-driving data while missing its own timelines to deliver on its promises.

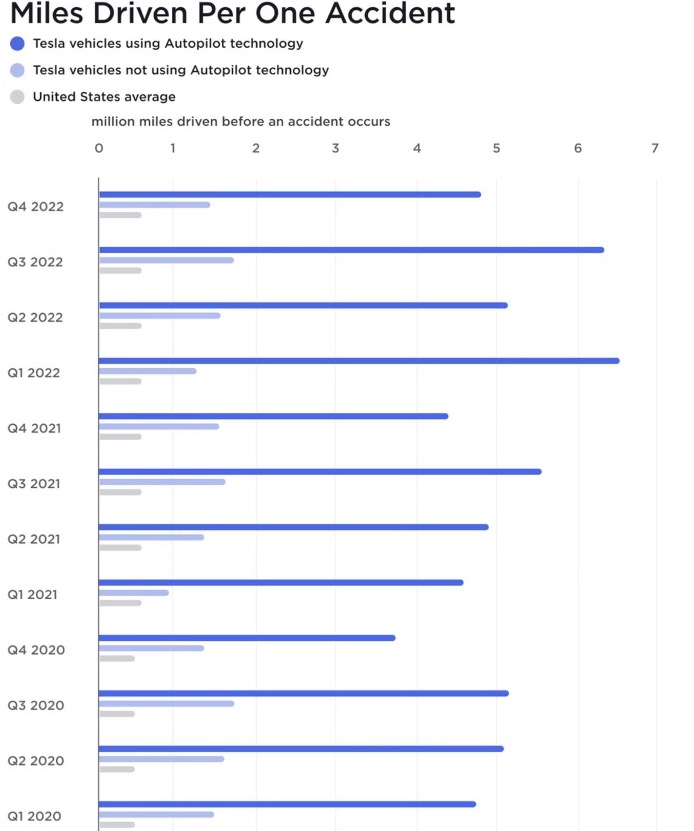

Since 2018, Tesla has been trying to create a benchmark for its improvement in Autopilot safety by releasing a quarterly report that compares the number of miles per accident on Autopilot versus off Autopilot. The data was always limited and criticized for not taking into account that accidents are more common on city roads and undivided roads than on the highways, where Autopilot is most commonly used.
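To see why the road mix matters, here is a minimal Python sketch. Every number in it is invented for illustration and is not Tesla or NHTSA data, and the names (`Exposure`, `miles_per_accident`, `assisted`, `manual`) are hypothetical. It assumes the per-mile risk on each road type is identical with and without the driver-assist system, and only the share of highway miles differs.

```python
from dataclasses import dataclass


@dataclass
class Exposure:
    miles: float    # miles driven on this road type
    accidents: int  # accidents recorded on this road type


def miles_per_accident(segments):
    """Aggregate miles per accident across one or more road types."""
    segments = list(segments)
    total_miles = sum(s.miles for s in segments)
    total_accidents = sum(s.accidents for s in segments)
    return total_miles / total_accidents


# Invented figures: highways see 1 accident per 5M miles and city roads
# 1 per 1M miles, regardless of whether the system is engaged.
assisted = {
    "highway": Exposure(90_000_000, 18),  # mostly highway miles
    "city": Exposure(10_000_000, 10),
}
manual = {
    "highway": Exposure(30_000_000, 6),
    "city": Exposure(70_000_000, 70),     # mostly city miles
}

assisted_rate = miles_per_accident(assisted.values())
manual_rate = miles_per_accident(manual.values())

for road in ("highway", "city"):
    print(road,
          miles_per_accident([assisted[road]]),
          miles_per_accident([manual[road]]))
print("aggregate", round(assisted_rate), round(manual_rate))
```

In this made-up scenario, the aggregate figure makes the assisted miles look more than twice as safe even though the risk on each road type is exactly the same. That is the core of the criticism: a single miles-per-accident number cannot separate the effect of the system from the effect of where it is used.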

However, the reports were still helpful for comparing Tesla’s results against its own past numbers and seeing whether anything had improved, and there were some incremental improvements at times. But then Tesla suddenly stopped releasing those quarterly reports in 2022 without any explanation.

In January 2023, the company released reports again for the first three quarters of 2022. A few months later, the company released the Q4 report, but since then, it has once again stopped releasing data, making the latest data almost a year old.

It’s not clear why Tesla is no longer releasing the data quarterly as it had done for years.

For the last few years, Tesla hasn’t had a press relations team in the US, so there is no one to ask questions like, “Why haven’t you released an Autopilot safety report in almost a year?” We are left to speculate.

Electrek’s Take

As we previously reported, the data is far from perfect because Autopilot is primarily used on highways. Meanwhile, the NHTSA data that Tesla uses as a baseline covers accidents everywhere and includes all vehicles, including older, poorly maintained ones, which are more likely to be involved in accidents than newer vehicles like Teslas.

It’s possible that Tesla decided the report wasn’t that useful, but it was still helpful for tracking the company’s numbers against its own earlier results and seeing improvements over time. Another explanation is that there have been no improvements over the last year and that Tesla could be trying to hide that.

That’s a real possibility, especially considering Tesla’s history of trying to be very opaque about its Autopilot and FSD Beta data.

While we have had very good access to self-driving data from programs by Waymo, Cruise, and others, by way of the California DMV’s self-driving testing oversight, Tesla has managed to avoid being included in that by arguing that its FSD Beta, which stands for “Full Self-Driving Beta,” is not a self-driving test program but a level-2 assisted driving system.

Tesla’s unwillingness to be more open to releasing data is concerning. Instead, CEO Elon Musk has often simply suggested that people watch videos of FSD Beta drives to keep track of progress, but that’s a very limited dataset.

It is also a problem that the most popular videos, including the ones promoted by Musk and the Tesla community, often make FSD Beta look its best.

When talking to a broader array of FSD Beta testers, you will get a much wider range of opinions than what you see with a YouTube search. The consensus is that Tesla’s computer vision system is truly impressive and that the driving behavior is good. However, it still often makes dangerous mistakes, and the path to a level-4 or level-5 self-driving capability is less than clear.

That’s why it would be nice to have some data to track and see improvements over time toward Tesla actually delivering on a promise it has been making to new buyers since 2016.

Why no data, Tesla? Why?
